The pipeline flow in the current app is intentionally split into two stages:

- create the pipeline shell from Data Pipelines

- finish the operational setup in the builder canvas

Key routes

- List: /workspace/data-pipelines

- Create shell: /workspace/data-pipelines/new

- Builder: /workspace/data-pipelines/[id]/builder

- Detail page: /workspace/data-pipelines/[id]
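For scripting or link generation, the routes above can be assembled from a pipeline id. The following is a minimal sketch: the helper name `pipeline_routes` and the sample id `abc123` are hypothetical, and only the path patterns come from the route list.

```python
# Hypothetical helper: only the path patterns are taken from the route list.
BASE = "/workspace/data-pipelines"


def pipeline_routes(pipeline_id: str) -> dict[str, str]:
    """Return the four app routes associated with one pipeline id."""
    return {
        "list": BASE,
        "create": f"{BASE}/new",
        "builder": f"{BASE}/{pipeline_id}/builder",
        "detail": f"{BASE}/{pipeline_id}",
    }


routes = pipeline_routes("abc123")  # "abc123" is a made-up id
print(routes["builder"])  # /workspace/data-pipelines/abc123/builder
```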

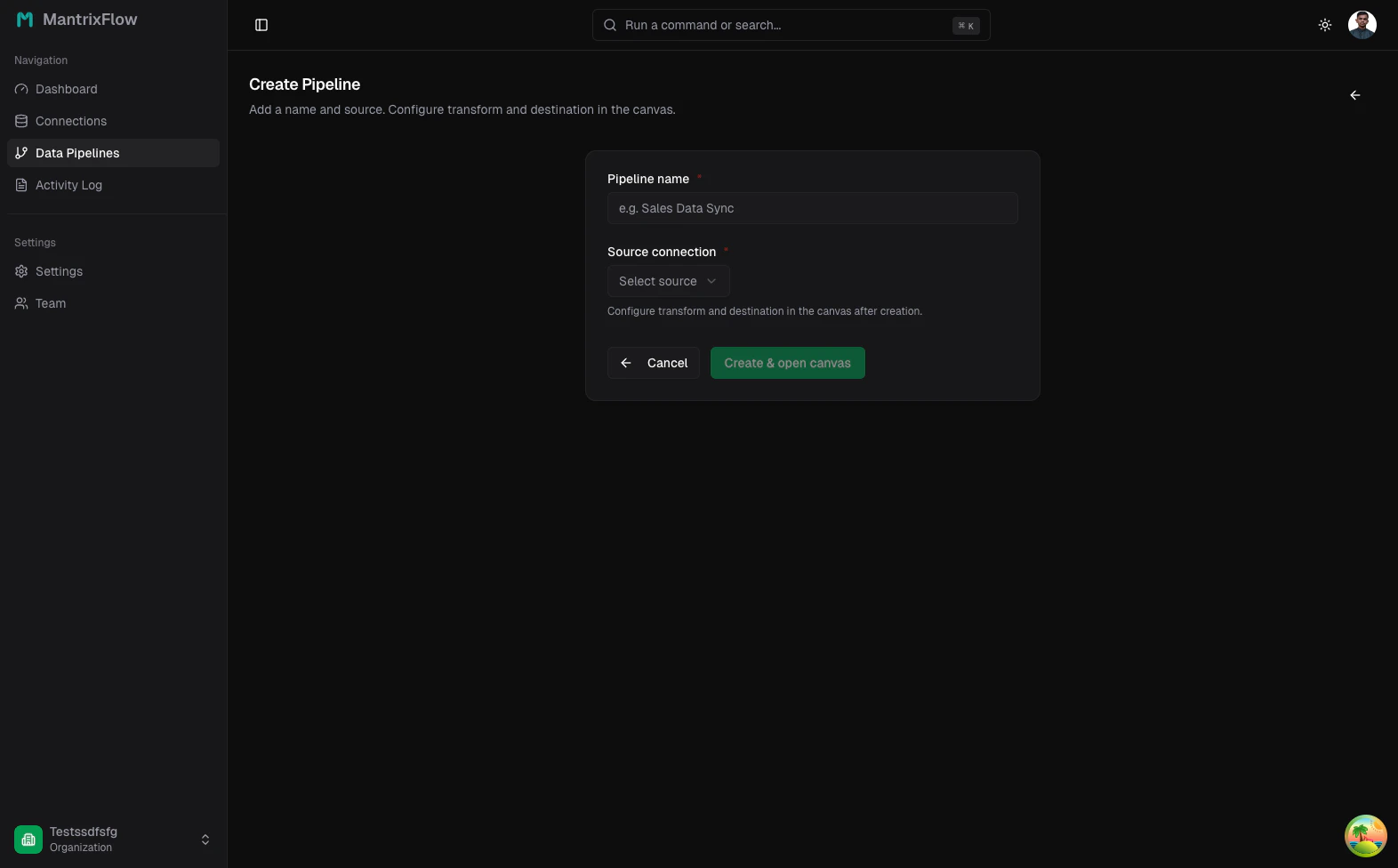

Create the pipeline shell

Start with a pipeline name and a source connection. The create page does not ask for every configuration option up front because the builder owns stream selection, transform logic, destination setup, and schedule.

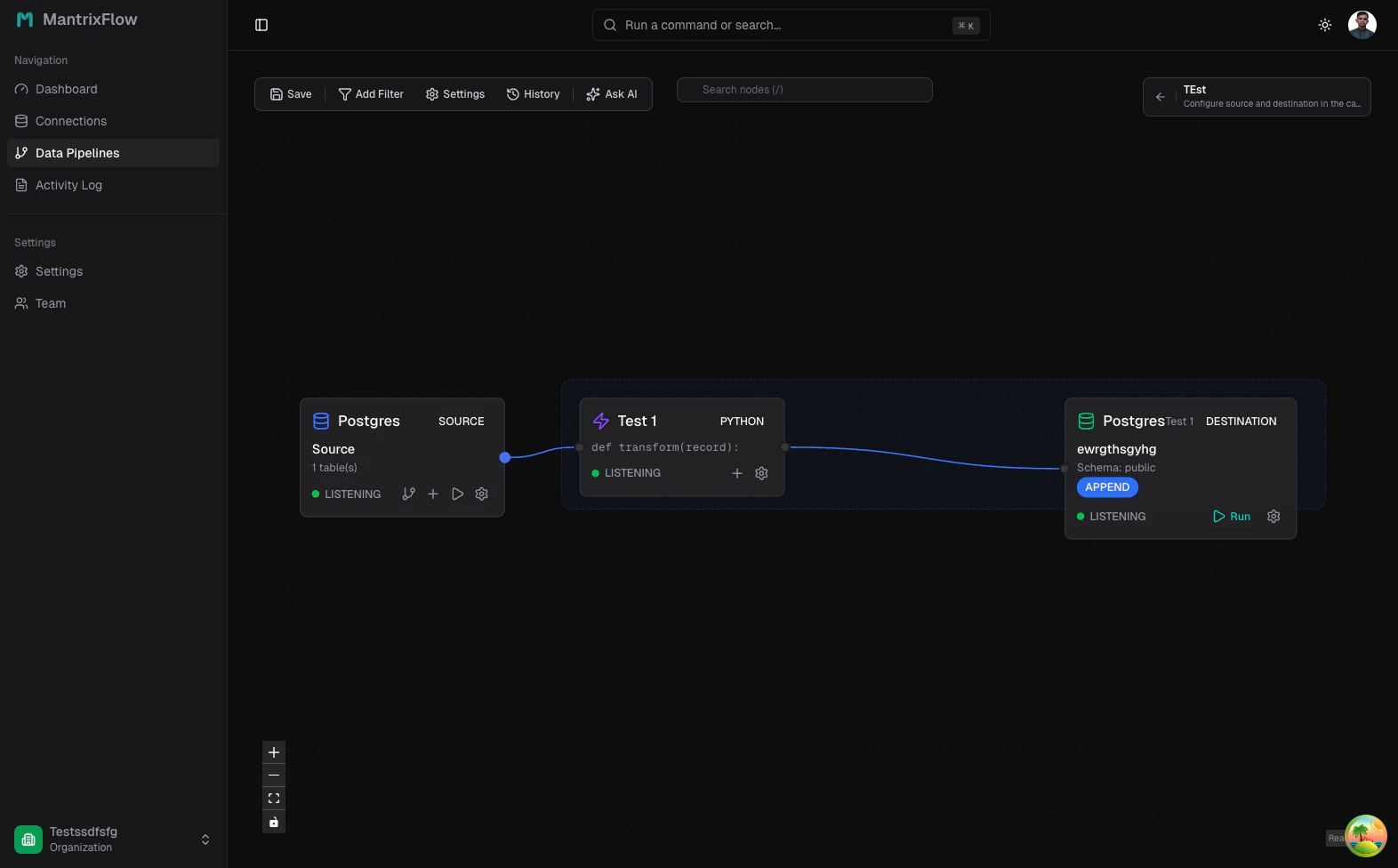

Configure the builder

The builder is the real control room for the pipeline.

- Source panel (⚙️ on Source node): choose tables, Discover schema, Preview raw rows.

- Transform node (on canvas): SQL editor using {{ source }} for quick reshaping.

- Destination panel (⚙️ on Destination node): 5 tabs: Config, Normalisation, dbt Layer, Preview, Scheduling.

- Action bar: save, run manually, and open settings.
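To make the {{ source }} placeholder concrete, here is a small sketch of what a first transform might look like and how it reads once the placeholder is replaced by a table name. The column names (`id`, `customer_id`, `amount`) and the `render_transform` helper are assumptions for illustration. The builder resolves the placeholder itself, and how it does so internally is not described here, so this only imitates the substitution locally for review.

```python
import re

# Illustrative transform: the column names are assumptions, not a real schema.
TRANSFORM_SQL = """
SELECT
    id,
    customer_id,
    amount,
    amount >= 100 AS is_large_order  -- one small enrichment field
FROM {{ source }}
"""

# Matches the placeholder with or without inner spaces.
_PLACEHOLDER = re.compile(r"\{\{\s*source\s*\}\}")


def render_transform(sql: str, source_relation: str) -> str:
    """Replace the {{ source }} placeholder with a concrete relation name.

    Local imitation only; the builder performs its own resolution.
    """
    # A function replacement avoids backslash-escape handling in the name.
    return _PLACEHOLDER.sub(lambda _match: source_relation, sql)


rendered = render_transform(TRANSFORM_SQL, "public.orders")
print(rendered)
```

Keeping the first transform this small means any failure points at the source or destination setup and not at the SQL.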

The recommended first-run sequence

1. Select one source table or resource

Start with one high-value object such as public.orders, stripe.customers, or github.pull_requests. This keeps validation focused.

2. Keep the first transform simple

Either pass records through unchanged or add one small enrichment field so debugging stays straightforward.

3. Configure the destination carefully

Pick the destination connection, confirm the schema and table name, and keep the first write behavior conservative. In current builder releases, Upsert is the primary live option.

4. Save before you run

Use Save in the action bar so the selected stream, transform script, destination, and schedule are persisted.

5. Run manually

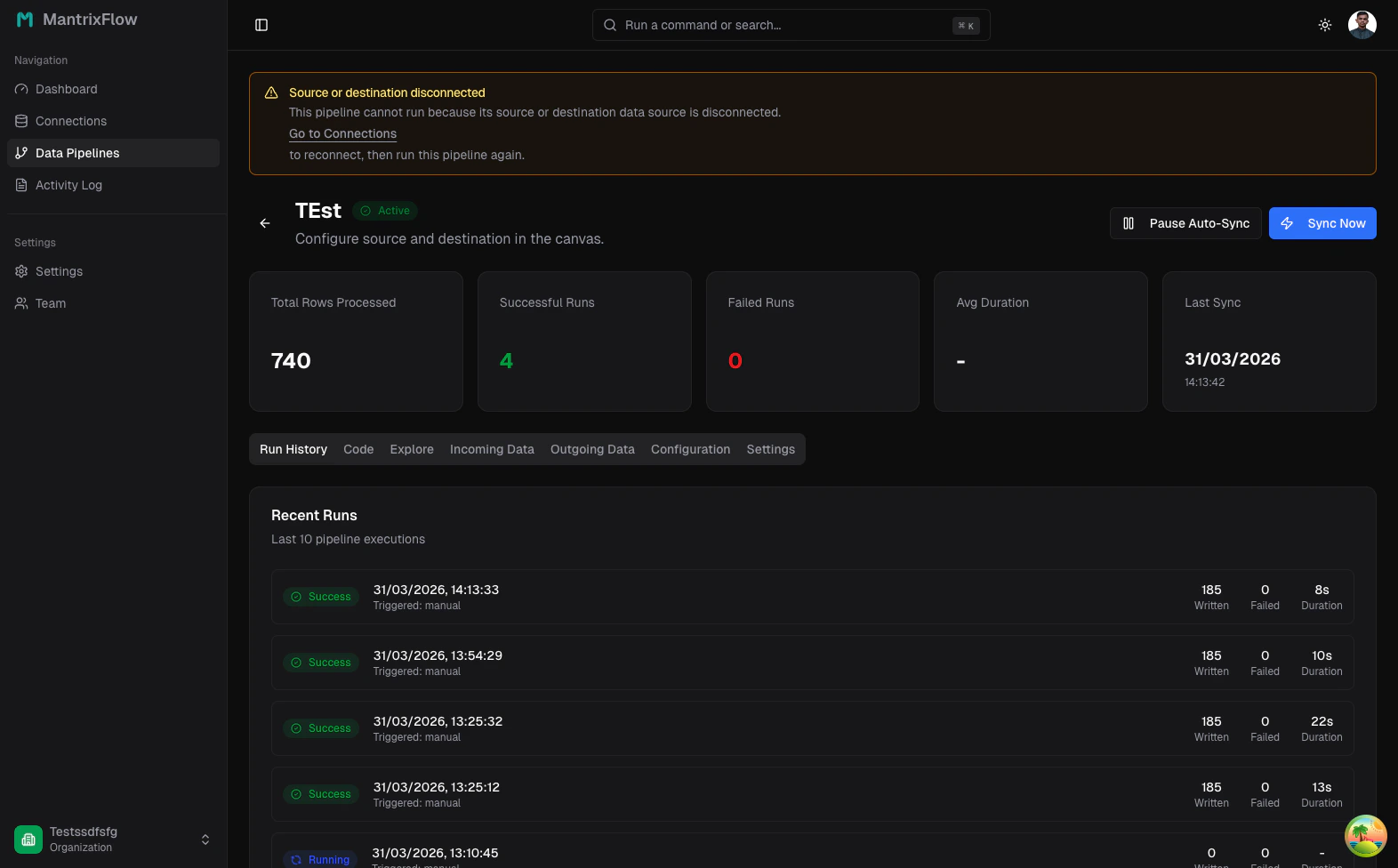

Use Run Now to validate the pipeline with operator attention before you introduce automation.

Review run history

After the run, open the detail page and inspect Run History. This is where you confirm status, row counts, runtime, and whether a pipeline succeeded after a credential or schema change.

Typical reasons a run fails

- the source or destination connection was edited but not re-tested

- the selected source table no longer exists or permissions were removed

- the SQL transform or dbt model has a syntax error

- the destination schema or table name is wrong

- a schedule fired after a credential rotation

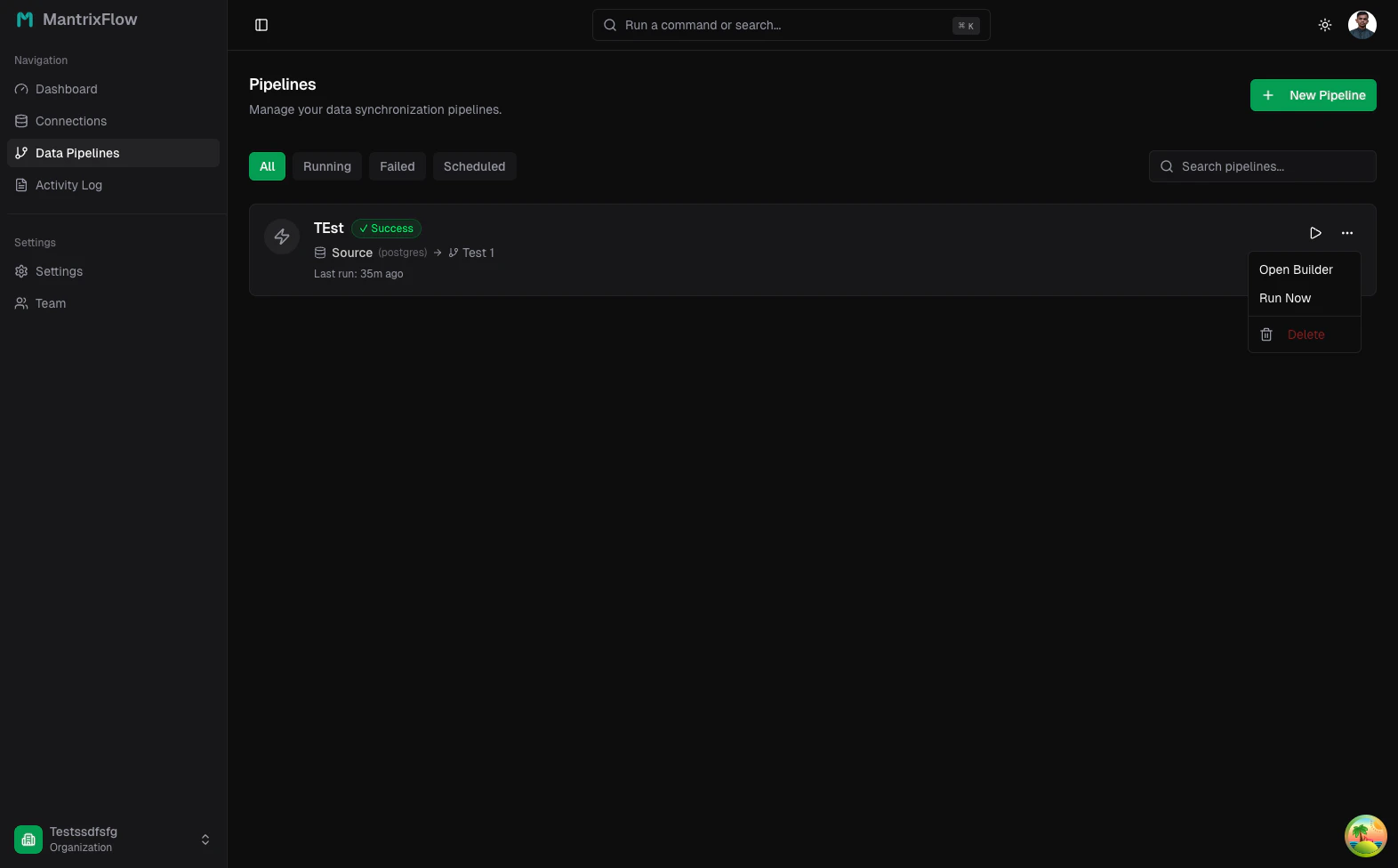

Row actions on the pipeline list

Use the list page for fast operations:

- Open Builder to change the graph

- Run Now for manual validation

- Delete when you intentionally retire the pipeline

When to schedule a pipeline

Schedule only after three things are true:

- the source connection tests cleanly

- the destination receives the correct shape

- at least one manual run has succeeded end to end
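The three conditions above can be expressed as a simple pre-scheduling checklist. This is a sketch under stated assumptions: `PipelineReadiness` and `blockers` are hypothetical names, and the flags are set by whoever validated the pipeline and are not read from any product API.

```python
from dataclasses import dataclass


@dataclass
class PipelineReadiness:
    # Hypothetical flags mirroring the three conditions listed above.
    source_test_passed: bool
    destination_shape_verified: bool
    manual_run_succeeded: bool


def blockers(state: PipelineReadiness) -> list[str]:
    """Return the unmet conditions; an empty list means scheduling is reasonable."""
    unmet = []
    if not state.source_test_passed:
        unmet.append("source connection has not tested cleanly")
    if not state.destination_shape_verified:
        unmet.append("destination shape has not been verified")
    if not state.manual_run_succeeded:
        unmet.append("no manual run has succeeded end to end")
    return unmet


state = PipelineReadiness(
    source_test_passed=True,
    destination_shape_verified=True,
    manual_run_succeeded=False,
)
print(blockers(state))  # ['no manual run has succeeded end to end']
```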